On 19 January 2026, the Department for Education (DfE) published its updated Generative AI Product Safety Standards at the UK AI for Education Summit. The same week, Andreas Schleicher, OECD Director for Education and Skills, spoke at the UK Government Summit on Generative AI and posed the question that sits behind everything that follows here:

“Generative AI did not knock politely on the schoolhouse door. It came in through the Wi-Fi, and the question every system now faces is: will AI drift into classrooms by accident, or will we govern it by design?”

Since publication, misconceptions have spread about what these standards require of schools. Some myths create unnecessary paralysis. Others provide dangerous false reassurance. The ten most common myths we hear are:

- “The DPIA is the supplier’s problem, not ours”

- “If the supplier says it’s safe, we don’t need to check”

- “The AI tool has built‑in filters, so we’re covered”

- “The tool detects safeguarding concerns automatically, so staff don’t need to monitor”

- “These standards only apply to education‑specific AI tools”

- “The standards mean we basically can’t use AI in schools”

- “The standards are just guidance; they carry no weight”

- “These are just about data protection”

- “No tool meets all these standards, so there’s no point trying”

- “We have to be fully compliant immediately or we’re breaking the law”

With this in mind, the Good Future Foundation addresses each myth with direct reference to what the DfE standards say. It also broadens the conversation with international perspectives from UNESCO, the OECD and the EU AI Act that the DfE standards alone do not fully capture.

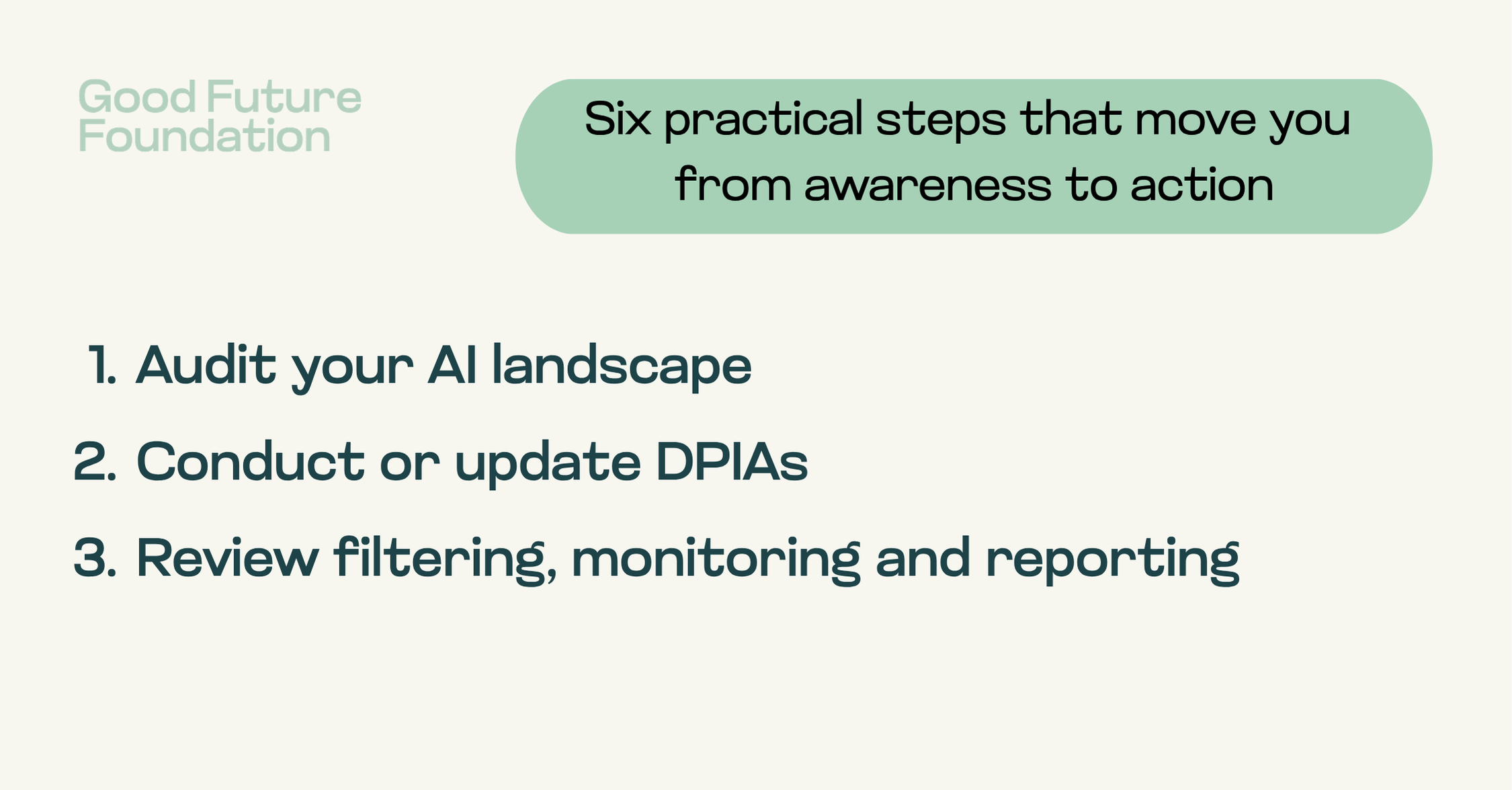

We have also suggested six practical steps that schools should take now in response to these standards, and we look forward to sharing them in our in-person professional development training.